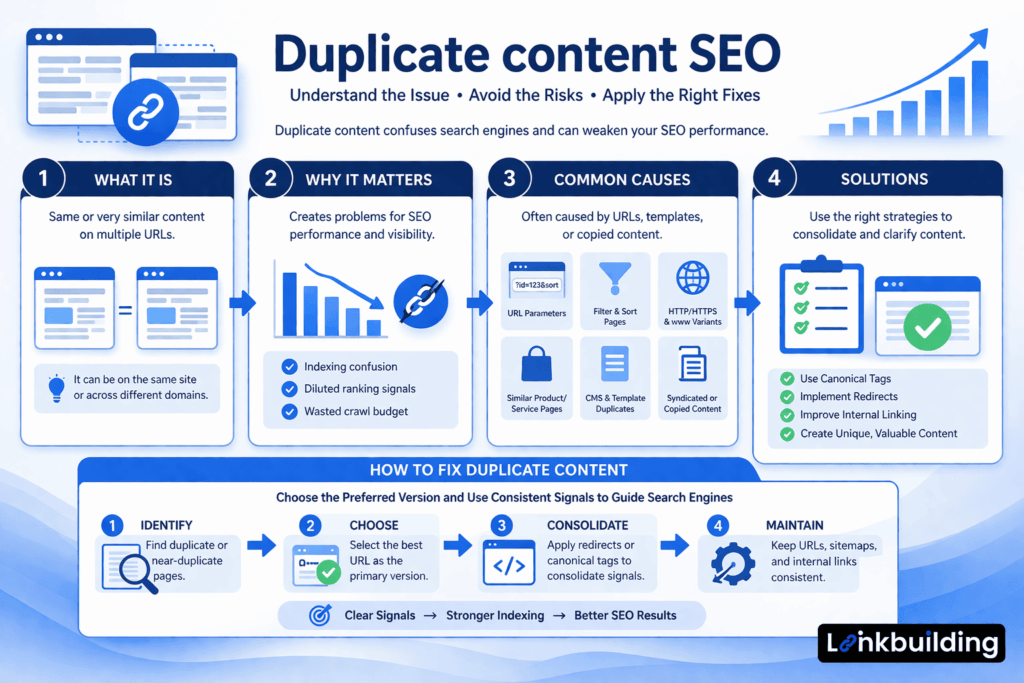

Duplicate Content SEO: What It Really Means and How to Handle It Correctly

Duplicate content is one of the most over-discussed and misunderstood topics in SEO. Many site owners hear the term and immediately worry about penalties, lost rankings, or being filtered out of search entirely. In practice, the issue is usually less dramatic and more structural.

That does not mean it is unimportant. Duplicate content can create indexing confusion, weaken URL consolidation, dilute internal relevance, and make it harder for search engines to understand which page should rank. On larger websites, it often becomes a signal that the technical setup needs closer attention.

That is why duplicate content SEO matters. The goal is not to panic every time similar content appears across URLs. The goal is to understand when duplication is normal, when it becomes a problem, and how to manage it without creating more technical complexity than necessary.

This article explains what duplicate content means in SEO, why it matters, how it works, what commonly causes it, and how to handle it strategically.

What Is Duplicate Content SEO?

Duplicate content SEO refers to how search engines interpret and respond to content that appears in identical or near-identical form across multiple URLs.

In practical terms, duplicate content exists when search engines can access two or more pages that contain the same or substantially similar content. Those pages may be on the same website or across different domains.

This does not automatically mean there is a penalty. In most cases, the problem is not punishment. The problem is ambiguity.

If search engines see multiple versions of the same content, they need to decide:

- which version should be indexed

- which version should rank

- which version should receive consolidated signals

When that decision is unclear, organic performance can become less efficient.

Common duplicate content SEO situations include:

- tracking or sorting parameters

- filtered category pages

- printer-friendly versions

- HTTP and HTTPS duplicates

- trailing slash inconsistencies

- www and non-www variants

- repeated product descriptions

- syndicated content across websites

- similar location or service pages with minimal differentiation

Why Duplicate Content Matters

Duplicate content matters because search engines want clear signals. When several URLs compete to represent the same or very similar content, that clarity weakens.

It creates indexing confusion

Search engines may crawl several versions of the same page and decide to index only one. If the wrong version gets selected, the page you actually want to rank may receive less visibility.

It can dilute ranking signals

If internal links, backlinks, or crawl attention are spread across several duplicate versions, the main page may not receive the strongest consolidated signal.

It wastes crawl attention

On larger sites, duplicate URLs can consume crawl resources that would be better spent on important or newly updated content. This becomes more relevant on ecommerce, publishing, and faceted navigation setups.

It often signals deeper technical problems

Duplicate content SEO issues are frequently symptoms of something else: weak URL structure, poor canonicalization, messy CMS behavior, or inconsistent internal linking. Fixing duplication often improves broader technical SEO at the same time.

How Duplicate Content SEO Works

Duplicate content SEO is less about penalties and more about search engine selection.

When search engines encounter multiple similar URLs, they usually try to cluster those pages together and choose one as the most representative version. That chosen page may then receive the main indexing and ranking treatment.

The challenge is that search engines do not always choose the version you want.

That is why duplicate content SEO relies on stronger supporting signals such as:

- canonical tags

- redirects

- consistent internal linking

- XML sitemaps

- clean URL structure

- stable indexation policies

The clearer those signals are, the easier it is for search engines to understand the preferred version.

Common Causes of Duplicate Content

URL parameters

Tracking codes, session parameters, sorting options, and filtered navigation often create alternate URLs that display the same core content. This is one of the most common causes of duplicate content on otherwise well-built sites.

CMS and template behavior

Some CMS platforms generate duplicates through archive pages, attachment URLs, tag pages, search results, or alternate paths to the same content. Left unmanaged, these variations can quietly expand over time.

Protocol and hostname inconsistencies

If HTTP and HTTPS versions are both accessible, or both www and non-www versions remain live, the site may create clear duplication across the domain level.

Similar product or category pages

Ecommerce sites often reuse product copy, category descriptions, or filter variants. Some overlap is unavoidable, but too much repetition across URLs weakens clarity.

Reused local or service pages

Many businesses create location or service pages by changing only the city or service name while leaving the rest of the content largely identical. Search engines often treat those pages as low-value duplicates unless they are meaningfully differentiated.

Syndicated or copied content

Content republished across multiple domains can also create duplicate content SEO challenges. Search engines need help understanding which version is original or preferred.

Duplicate Content vs Thin Content

Duplicate content and thin content are related but not identical.

Duplicate content means two or more pages are too similar to each other. Thin content means a page lacks enough value or substance on its own.

A page can be unique and still be thin. It can also be detailed and still be duplicated across multiple URLs.

This distinction matters because the solution depends on the problem. Duplicate content usually needs consolidation or stronger canonical signals. Thin content usually needs better content quality or a different indexation decision.

Common Duplicate Content SEO Mistakes

Assuming every duplicate is a penalty issue

This is one of the biggest misconceptions. Search engines do not usually treat duplicate content as a sitewide punishment event. The bigger issue is that they may not rank or index the version you want.

Canonicalizing pages that should actually be redirected

A canonical tag is useful when multiple versions need to remain accessible. But if a duplicate page should no longer exist for users, a redirect is often cleaner.

Blocking duplicates in robots.txt too early

If a page is blocked before search engines can crawl it properly, they may never see the canonical tag or other signals. This can make duplicate content management less effective.

Letting internal links point to multiple versions

If the same content is accessible through different URLs, and internal links use several versions inconsistently, the site weakens its own preferred signal.

Creating too many near-identical pages

This often happens with location, service, or product variations. Pages that are only superficially different may not give search engines a reason to treat them as distinct assets.

Practical Guidance for Fixing Duplicate Content

The best way to handle duplicate content SEO is to start with the source of duplication, not just the symptom.

Identify the duplication pattern

Look for repeated problems at the template or system level. Are duplicates coming from parameters, filtered navigation, alternate paths, or weak CMS settings? One-off page fixes rarely solve a pattern-driven issue.

Decide which version should be primary

Before applying canonical tags or redirects, decide which URL should be the preferred version. That page should be indexable, internally linked, and aligned with your long-term structure.

Use the right technical control

The correct fix depends on the situation:

- use redirects when old or duplicate URLs should no longer exist

- use canonical tags when alternate versions need to stay live

- use noindex where pages should remain accessible but not appear in search

- improve internal linking so the preferred version is reinforced consistently

Clean up supporting signals

A canonical tag alone is not enough if the sitemap, internal links, and redirects all point elsewhere. Good duplicate content SEO depends on consistency.

Improve genuinely overlapping content

If several pages are similar because they do not offer enough unique value, the solution may be content differentiation rather than technical consolidation alone.

Related Topics That Support Duplicate Content SEO

Duplicate content rarely exists in isolation. It connects directly to several other technical SEO topics, especially:

- canonical tag implementation

- URL structure SEO

- crawling and indexing

- robots.txt SEO

- XML sitemap SEO

- internal linking

- technical SEO audits

In a cluster structure, this page can naturally support or be supported by those topics without turning into a general technical SEO guide.

Timing and Expectations

Duplicate content fixes can affect crawling and indexing relatively quickly when the problem is obvious and the technical signals are aligned. Redirects, canonical corrections, and indexation cleanup may help search engines settle on the preferred version more efficiently after recrawling.

But it is important to stay realistic. Duplicate content SEO fixes do not create better rankings out of thin air. They remove ambiguity. That helps strong pages perform closer to their actual potential.

If the main page is still weak, poorly targeted, or low in authority, cleaning up duplicates will not solve those larger SEO issues by itself.

Conclusion

Duplicate content SEO is less about punishment and more about clarity.

Search engines can handle similar content, but they still need help understanding which version of a page should be indexed, ranked, and treated as primary. When that guidance is missing, performance can become fragmented and inefficient.

The most effective approach is not to fear duplication in the abstract. It is to manage it with clear technical signals, stronger structure, and better content decisions. That means choosing the right preferred URLs, consolidating overlapping versions, and making sure the rest of the site supports those choices.

For websites that want sustainable organic growth, that kind of clarity matters. Duplicate content does not always create a dramatic SEO problem, but unresolved ambiguity rarely helps a site perform at its best.